Week of 2021-08-23

Rigor and shared mental model space

Alex Komoroske has another great set of new cards on rigor and here’s a little riff on them. The thing that caught my attention -- and resonated with me -- was the notion of faux rigor. Where does it come from?

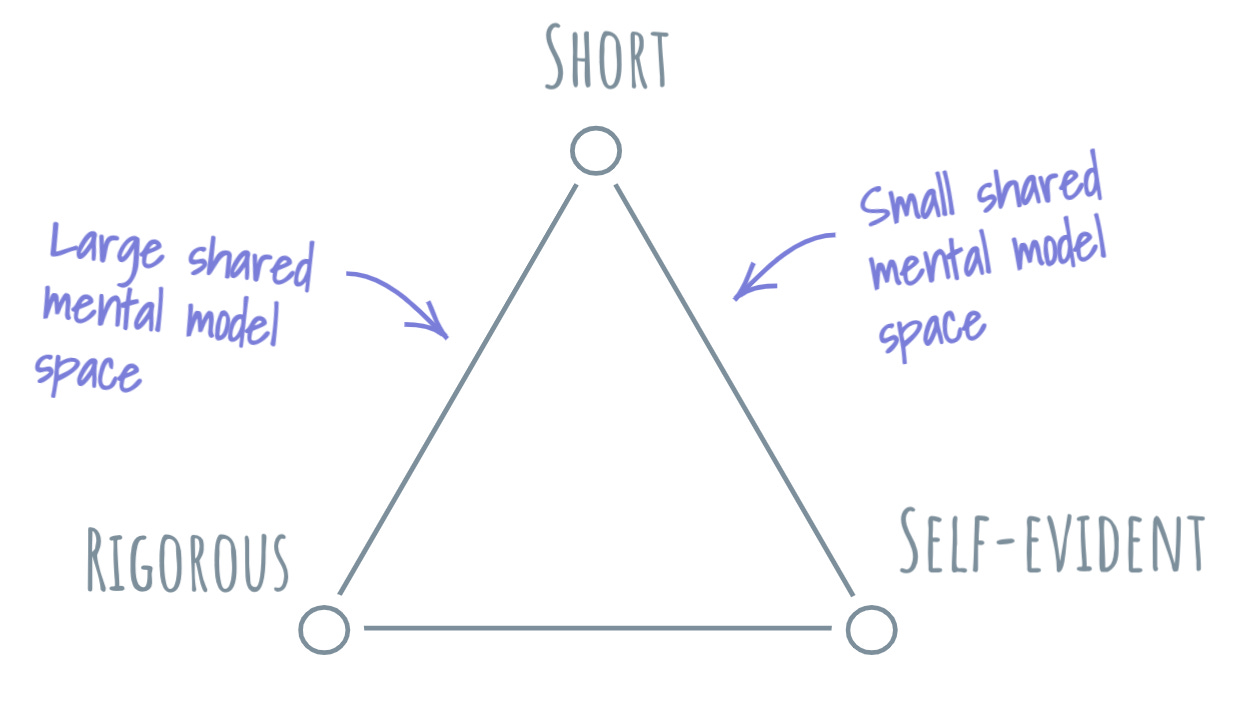

Alex’s excellent framing of argument trade-offs as an iron triangle offers a hint. Time is the ever-present constraint, and that means that the Short corner tends to prevail. Given a 1-pager and a 30-pager, the choice is a no-brainer for a busy leader. So the battle for rigor now depends on the argument being self-evident. Here, I want to build the story around the concept of shared mental model space. A shared mental model space is the intersection of all mental models across all members of a team.

In a small, well-knit team that’s worked together for a long time, the shared mental model space is quite large. People speak in shorthand, and getting your teammate up to speed on a new idea is quite easy: they already reached most of the stepping stones that got you there. In this environment, we can still find rigorous arguments, because the Self-evident corner is satisfied by the expansive shared mental model space.

As the team grows larger or churns in membership, the shared mental model space shrinks. Shorthands start needing expansion, semantics need definition, and arguments need longer and longer builds. With a smaller shared mental model space, the argument needs to be more self-contained. Eventually, rigor is sacrificed to the lowest common denominator of mental models. In a limited shared mental model space, only the most basic short and self-evident arguments can be made. Value good. Enemy bad.
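To make the shrinking concrete, here is a minimal sketch that treats each person's mental models as a set of labels and the shared space as the set intersection. The function name `shared_space`, the people, and the model labels are all made up for illustration; real mental models are obviously not tidy strings.

```python
def shared_space(team: dict[str, set[str]]) -> set[str]:
    """Return the models that every member of the team holds (hypothetical helper)."""
    if not team:
        return set()
    return set.intersection(*team.values())


# Illustrative data only: two long-time teammates with heavily overlapping models.
small_team = {
    "ana": {"iron triangle", "cynefin", "compounding loops", "okrs"},
    "bo": {"iron triangle", "cynefin", "compounding loops"},
}

# The same team after a newcomer with a different background joins.
grown_team = dict(small_team)
grown_team["cy"] = {"iron triangle", "okrs"}

print(sorted(shared_space(small_team)))
print(sorted(shared_space(grown_team)))
```

Adding a member can only keep the intersection the same or make it smaller, which is the mechanism behind the shrinking described above.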

This spells trouble for larger teams or teams with high turnover. Through this lens, it’s easy to see how they would struggle to maintain a high level of rigor in the arguments that are being presented and evaluated within them. And as the level of rigor declines, so will the organization’s capacity to make sound strategic decisions. After faux rigor becomes the norm, this norm itself becomes a barrier that traps the organization in existential strategic myopia.

Especially in situations when a small organization begins to grow, it might be a good investment to consider how it will maintain a large shared mental model space as new people arrive and the old guard retires. Otherwise, its growth will correlate with the decline of argument rigor within its ranks.

🔗 https://glazkov.com/2021/08/26/rigor-and-shared-mental-model-space/

Correlated compounding loops

A thing that became a bit more visible for me is this idea of correlated compounding loops. I used to picture feedback loops as these crisp, independent structures, and found that this is rarely the case in practice. More often than not, it feels like there are multiple compounding loops and some of them seem to influence, be influenced by, or at least act in some sort of concordant dance with each other. In other words, they are correlated. Such correlation can be negative or positive.

Like any complexity lens, this is a shorthand. When we see correlated compounding loops, we are capturing glimpses of some underlying forces, and as it happens with many complex adaptive systems, we don’t yet understand their nature. All we can say is that we’re observing a bunch of correlated loops. To quickly conjure up an example, think of the many things impacted by the compounding loop of infections during the pandemic.

The thing that makes this shorthand even more useful is that we can now imagine a continuum of correlation between two compounding loops with two completely uncorrelated loops at one extreme and them coming together in perfect union at the other. Now we can look at a system and make some guesses about the correlation of compounding loops within it.
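One way to play with this continuum is a toy simulation. The sketch below is my own assumption, not a model from the post: each loop's per-step growth rate is a blend of a shared random force and a private one, and a hypothetical `coupling` knob (0 to 1) sets the blend. The function name, the 1% rate scale, and the step count are all arbitrary.

```python
import math
import random


def pearson(xs: list[float], ys: list[float]) -> float:
    """Plain Pearson correlation coefficient of two equal-length series."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sx = math.sqrt(sum((x - mx) ** 2 for x in xs))
    sy = math.sqrt(sum((y - my) ** 2 for y in ys))
    return cov / (sx * sy)


def loop_correlation(coupling: float, steps: int = 2000, seed: int = 0) -> float:
    """Simulate two compounding loops and return the correlation of their growth rates.

    coupling=0.0 means each loop is driven only by its own force;
    coupling=1.0 means both loops are driven entirely by the shared force.
    """
    rng = random.Random(seed)
    a = b = 1.0  # loop "sizes", compounded every step
    rates_a, rates_b = [], []
    for _ in range(steps):
        shared = rng.gauss(0, 1)
        own_a = rng.gauss(0, 1)
        own_b = rng.gauss(0, 1)
        rate_a = 0.01 * (coupling * shared + (1 - coupling) * own_a)
        rate_b = 0.01 * (coupling * shared + (1 - coupling) * own_b)
        a *= 1 + rate_a  # compounding: growth applies to the current size
        b *= 1 + rate_b
        rates_a.append(rate_a)
        rates_b.append(rate_b)
    return pearson(rates_a, rates_b)


for c in (0.0, 0.5, 1.0):
    print(f"coupling={c:.1f} correlation={loop_correlation(c):.2f}")
```

In this toy setup, sliding `coupling` from 0 to 1 moves the two loops from roughly uncorrelated to moving in lockstep, which is one crude way to picture the two extremes.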

It seems that there will be more correlated compounding loops in systems that are more complex. In other words, the more connected the system is, the less likely we are to find completely uncorrelated compounding loops. To some degree, everything influences everything.

There are some profound implications in this thought experiment. If this hypothesis is true, the truly strong and durable compounding loops will be incredibly rare in highly connected systems. If everything influences everything, every compounding loop has high variability, which weakens them all, asymptotically approaching perfect dynamic equilibrium. And every new breakthrough -- a discovery of a novel compounding loop -- will just teach the system how to correlate it with other bits of the system. In a well-connected system, the true scarce resource is a non-correlated compounding loop.

🔗 https://glazkov.com/2021/08/25/correlated-compounding-loops/

Settled and “not settled yet” framings

I was working on decision-making frameworks this week and had a pretty insightful conversation with a colleague, using the Cynefin framework as context. Here’s a story that popped out of it.

Many (most?) challenging decision-requiring situations seem to reside in this liminal space between Complex and Complicated quadrants. We humans generally dislike the unpredictability of the complex space, so we invest a lot of time and energy into trying to shift the context: try to turn a complex situation into a complicated one. Especially in today’s business environments, we have a crapload of tools (processes, practices, metrics, etc.) to tackle complicated situations, and it just feels right to quickly get to the point where a problem becomes solvable. This property of solvability is something that is acquired as a result of transitioning through the Complex-Complicated liminal space. I use the word “framing” to describe this transition: we take a phenomenon that looks fuzzy and weird, then we frame it in terms of some well-established metaphors. Once framed, the phenomenon snaps into shape: it becomes a problem. Once a problem exists, it can be solved using those nifty business tools.

This transformation is lossy. Some subtle parts of the phenomenon’s full complexity become invisible once it becomes “the problem.” If I am lucky with my framing, these subtle parts will remain irrelevant. In the less happy case, the subtle parts will continue influencing the visible parts -- the ones we see as “the problem.” We usually call these side effects. With side effects, the problem will appear to be resisting our attempts to solve it. No matter how much we try, our solutions will create new problems, new side effects to worry about.

In this story, it’s pretty evident that effective framing is key to making a successful Complex-Complicated transition. Further, it’s also likely that framing is an iterative process: once we encounter side effects, we are better off recognizing that what we believe is “the problem” might be a result of ineffective framing -- and shifting back to complex space to find a more effective one.

My colleague had this really neat idea that, given the multitudes of framings and problems we collectively track in our daily lives, it might be worth tagging the problems according to the experienced effectiveness of their framing. If a problem is teeming with side effects, the framing has “not settled yet” -- it’s the best framing we’ve got, but approach it lightly, do seek out novel ways to reframe the phenomenon. Decisions based on this framing are unlikely to stick or bear fruit. Conversely, settled framings are the ones that we haven’t had to adjust in a while and are consistently allowing us to produce side-effect-free results. Here, decisions can be proceduralized and turned into best practices.
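As a rough sketch of what such tagging might look like in a tracker, here is one possible shape. Everything in it is an assumption of mine: the `Problem` fields, the `tag_framing` name, and especially the thresholds (zero recent side effects, twelve weeks without reframing), which are placeholders rather than anything my colleague proposed.

```python
from dataclasses import dataclass
from enum import Enum


class FramingStatus(Enum):
    SETTLED = "settled"
    NOT_SETTLED_YET = "not settled yet"


@dataclass
class Problem:
    name: str
    recent_side_effects: int    # side effects observed since the last review
    weeks_since_reframing: int  # how long the current framing has gone unadjusted


def tag_framing(
    problem: Problem,
    max_side_effects: int = 0,   # hypothetical threshold
    min_stable_weeks: int = 12,  # hypothetical threshold
) -> FramingStatus:
    """Tag a problem by how well its current framing has held up."""
    stable = problem.weeks_since_reframing >= min_stable_weeks
    clean = problem.recent_side_effects <= max_side_effects
    if stable and clean:
        return FramingStatus.SETTLED
    return FramingStatus.NOT_SETTLED_YET


# Illustrative entries only.
release_process = Problem("release process", recent_side_effects=0, weeks_since_reframing=40)
team_growth = Problem("team growth", recent_side_effects=5, weeks_since_reframing=3)

print(release_process.name, "->", tag_framing(release_process).value)
print(team_growth.name, "->", tag_framing(team_growth).value)
```

The tag is only as good as the honesty of the side-effect count, so I'd treat it as a prompt to revisit a framing, not a verdict.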