Week of 2023-01-09

Where I talk about the wanderer, a tiny LLM-based experiment to try something other than the usual “chat with <foo>” scenarios. Also, “no ship ums”, a fun new name for developer environments with no direct venue to ship out of. Will it catch on?

Wanderer

Here’s another little experiment I built over the break. Like pretty much everyone these days, I am fascinated by the potential of applying large language models (LLMs) to generative use cases. So I wanted to see what’s what. As my target, I picked a fairly straightforward use case: AI as the narrator.

While I share in the excitement around applying AIs as chatbots and pure generators of content, I also understand that predictive models, no matter how sophisticated, will have a hard time crossing the chasm of the uncanny valley – especially when pushed to their limits. As a fellow holder of a predictive mental model, I am rooting for them, but I also know how long this road is going to be. We’ll be in this hollow of facts subtly mixed with cheerful hallucination for a while.

Instead, I wanted to try limiting the scope of where LLM predictions can wander. Think of it as putting bounds around some text that I (the user) deem as a potential source of insights and asking the AI to only play within these bounds. Kind of like “hey, I know you’ve read a lot and can talk about nearly anything, but right now, I’d like for you to focus: use only these passages from War and Peace for answering my questions”.

In this setup, the job of an LLM would be to act as, well… a language model! This is a terrible analogy, but until I have a better one, think of it as the speech center of a human brain: it possesses incredible capabilities, but when detached from the rest of the prefrontal cortex, it produces conditions where we humans just babble words. The passages from War and Peace (or any other text) act as a prefrontal cortex, grounding the speech center by attaching it to the specific context in which it offers a narration.

Lucky for me, this is a well-known pattern in current LLM applications. So, armed with a Jupyter cookbook, I set off on my adventure.

A key challenge that this cookbook overcomes is the limited context window of modern LLMs. For example, GPT-3 can handle only just over 4,000 tokens (or pieces of words) at a time, which means that I can’t simply stuff all of my writings into context. I have to be selective. I have to pick the right chunks out of the larger corpus to form the grounding context for the narrator. So, for example, if I have questions about Field Marshal Kutuzov eating chicken at Borodino, yet I give my narrator only the passages from Natasha's first ball, I will get unsatisfying results.
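To make the "pick the right chunks" step a bit more concrete, here is a minimal sketch in Python. It is not the cookbook's code: the cookbook approach relies on embeddings, while this toy version swaps in a bag-of-words cosine similarity so it runs without any external service. The names `bag_of_words`, `cosine`, `select_chunks`, and `token_budget` are all made up for illustration, and counting whitespace-separated words is only a rough stand-in for real token counting.

```python
import math
import re
from collections import Counter


def bag_of_words(text):
    """Toy stand-in for an embedding: lowercase word counts."""
    return Counter(re.findall(r"[a-z']+", text.lower()))


def cosine(a, b):
    """Cosine similarity between two word-count vectors."""
    dot = sum(a[word] * b[word] for word in a if word in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def select_chunks(question, chunks, token_budget):
    """Rank chunks by similarity to the question, then keep the
    best-ranked ones that still fit into the (approximate) budget."""
    query = bag_of_words(question)
    ranked = sorted(
        chunks, key=lambda c: cosine(query, bag_of_words(c)), reverse=True
    )
    selected, used = [], 0
    for chunk in ranked:
        cost = len(chunk.split())  # assumption: one word is roughly one token
        if used + cost > token_budget:
            continue  # too big for what is left; try a smaller chunk
        selected.append(chunk)
        used += cost
    return selected


chunks = [
    "Kutuzov sat at Borodino eating roast chicken while the battle raged.",
    "Natasha arrived at her first ball in a white dress.",
    "Pierre wandered across the field at Borodino watching the battle.",
]
context = select_chunks("What did Kutuzov eat at Borodino?", chunks, token_budget=25)
```

With this tiny budget, the two Borodino passages make it into `context` and Natasha's ball is left out, which is the kind of selectivity the narrator needs.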

I won’t go into technical details of how that’s done in this post (see the cookbook for the general approach), but suffice it to say, I was very impressed with the outcomes. After I had the code running (which, by the way, was right at the deca-LOC boundary), my queries yielded reasonable results with minimal hallucinations. When in the stance of a narrator, the LLM’s hallucinations look somewhat different: instead of presenting wrong facts, the narrator sometimes elides important details or overemphasizes irrelevant bits.

Now that I had working “ask ‘What Dimitri Learned’ a question” code, I wondered if the experience could be more proactive. What if I didn’t have a question? What if I didn’t know what to ask? What if I didn’t want to type, and just wanted to click around? And so the Wanderer was born.

Here’s how it works. When you first visit wanderer.glazkov.com, it will pull a few random chunks of content from the whole corpus of my writings, and ask the LLM to list some interesting key concepts from these chunks. Here, I am asking the narrator not to summarize or answer a specific question, but rather discern and list out the concepts that were mentioned in the context.

Then, I turn this list of concepts into a list of links. Intuitively, if the narrator picked out a concept from my writings, there must be some more text in my writings that elaborates on this concept. So if you click this link, you are asking the wanderer to collect all related chunks of text and then have the narrator describe the concept.

Once you’ve read the description provided by the Wanderer, you may want to explore related concepts. Again, I rely on the narrator to list some interesting key concepts related to the concept. And so it goes.
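The loop described above can be sketched roughly as follows. This is a guess at the shape of the flow, not the Wanderer's actual code: `ask_llm` and `find_related` are hypothetical callables that you would wire up to a real model and a real chunk retriever, the prompt wording is invented, and `fake_llm` and `keyword_match` are stubs that exist only so the sketch runs on its own.

```python
import random


def parse_concepts(reply):
    """Turn a one-concept-per-line reply into a clean list."""
    cleaned = (line.strip("-* \t") for line in reply.splitlines())
    return [concept for concept in cleaned if concept]


def start_wandering(chunks, ask_llm, rng, sample_size=3):
    """First visit: sample random chunks, ask the narrator for concepts."""
    sample = rng.sample(chunks, min(sample_size, len(chunks)))
    prompt = (
        "List interesting key concepts, one per line, from this text:\n\n"
        + "\n\n".join(sample)
    )
    return parse_concepts(ask_llm(prompt))


def visit_concept(concept, chunks, ask_llm, find_related):
    """Clicking a link: gather related chunks, describe the concept,
    then ask for related concepts to become the next set of links."""
    context = "\n\n".join(find_related(concept, chunks))
    description = ask_llm(
        f"Using only this text, describe '{concept}':\n\n{context}"
    )
    related = parse_concepts(
        ask_llm(
            f"List key concepts related to '{concept}', one per line, "
            f"found in this text:\n\n{context}"
        )
    )
    return description, related


# Stubs for illustration only; a real setup would call an actual model.
def fake_llm(prompt):
    if prompt.startswith("List"):
        return "- dandelions\n- elephants\n"
    return "A stub description."


def keyword_match(concept, chunks):
    return [c for c in chunks if concept.lower() in c.lower()]


corpus = [
    "Software dandelions are tiny prototypes.",
    "Elephants are mature software.",
    "Pace layers order ideas by speed.",
]
concepts = start_wandering(corpus, fake_llm, random.Random(0))
description, related = visit_concept(concepts[0], corpus, fake_llm, keyword_match)
```

Nothing here is stored between calls: each click builds its context, asks the narrator, and returns a fresh description plus a fresh list of links.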

When browsing this site (or “wandering”, obvs), it may feel like there’s a structure, a taxonomy of sorts that is maintained somewhere in a database. One might even perceive it as an elaborate graph structure of interlinked concepts. None of that exists. The graph is entirely ephemeral, conjured out of thin air by the narrating LLM, disappearing as soon as we leave the page. In fact, even refreshing the page may give you a different take: a different description of the concept or a different list of related concepts. And yes, it may lead you into dead ends: a concept that the narrator discerned, yet is failing to describe given the context.

The overall experience is surreal and – at least for me – highly generative. After wandering around for a while, it almost feels like I am interacting with a muse that is remixing my writing in odd, unpredictable, and wonderful ways. I find that I use the Wanderer for inspiration quite a bit. What concepts will it dig up next? What weird folds of logic will be unearthed with the next click? And every “hmm, that’s interesting” is a spark for new ideas, new connections that I haven’t considered before.

🔗 https://glazkov.com/2023/01/08/wanderer/

No ship ums

I’ve been thinking about the path that software dandelions take toward becoming elephants, and this really interesting framing developed in a conversation with my friends.

Software dandelions are tiny bits of software we write to prototype our ideas. They might be as small as a few lines of code or deca-LOCs, yet they capture the essence of some unique thought that we try to present to others. If, in a highly unlikely event, this dandelion survives this contact, I am usually encouraged to tweak it, grow it, incorporate insights. Through this process, the dandelion software becomes more and more elephant-like.

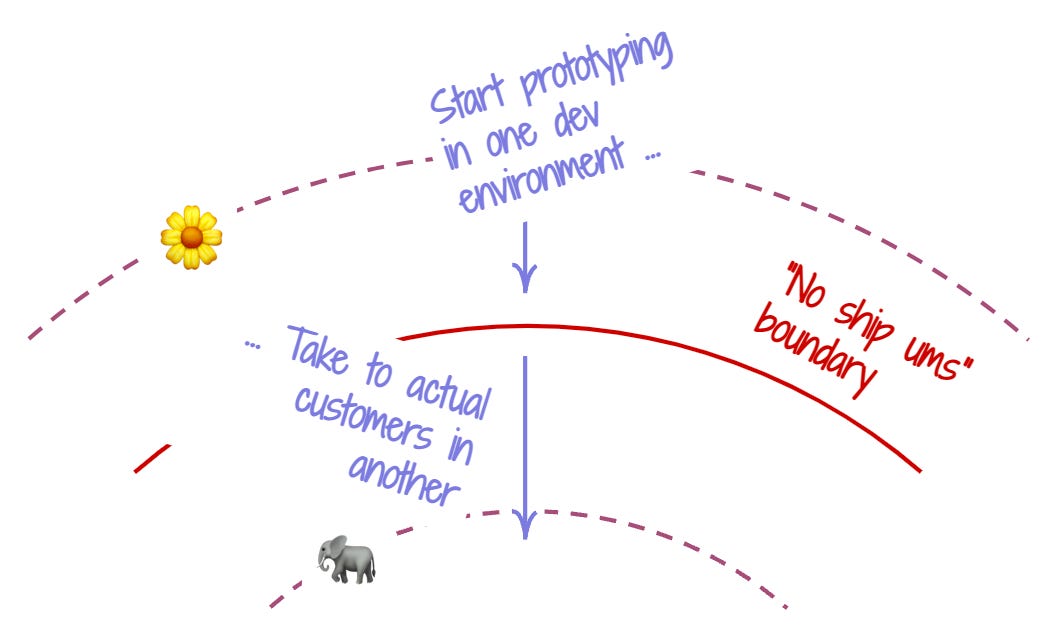

If I imagine idea pace layers (the concept from slide 55 on in glazkov.com/dandelions-and-elephants-deck), and draw a line from the outermost, full-dandelion meme layers to the innermost, absolute elephant ones, this line becomes the course of the idea maturity progression. As my dinky software traverses this course, it encounters a bunch of obstacles.

As I was looking at this progression, I realized that the environment in which I develop this software may impose constraints on how far my software can travel along this course – before it needs to switch to another environment.

One such constraint is what I called the “No ship ums”, kind of like those tiny bugs (yes, I am continuing that r/K-selection naming schtick). When in a development environment like that, I can develop my idea and make it work, and even share it with others, but I can’t actually ship it to potential users. A typical REPL tool is a good example of such an environment, with Jupyter/Colab and Observable notebooks being notable instances. While I could potentially build a working prototype using them, I would be hard pressed to treat them as something I would ship to my potential customers as-is.

Why would one build such a boundary into an environment? There are several reasons. When shipping is out of the question, many of the critical requirements (like security and privacy, for example) are less pronounced. An environment can be more relaxed and better tailored for wild experimentation.

More interestingly, such a barrier can be a strategically useful selection tool for dandelions. When I build something in a notebook, I might be excited that it works – yay! To get to the next stage of actually seeing it in the hands of a customer, the barrier offers the opportunity to pause. As the next step, I will need to port this work into another environment. Will it be worth my time? Is my commitment to the idea strong enough to put in the extra work?

On the other hand, there are definitely folks who would look at the paragraph above and say: “are you crazy?!” Putting in a speed bump of this sort might appear like the worst possible move. If we are to help developers reach for new, unexplored places, why would we want to add extra selection pressure? My favorite example here is Repl.it’s “Always On” switch. Like where this experiment is going? Just flip this switch and make it real.

So… who is right? My sense is that the answer depends on the context of what we’re trying to do. Think of the “no ship ums” as a selection pressure ratcheting tool. Anticipate hordes of developers entering your environment and launching billions of tiny gnats? Consider applying it. Unsure about the interest and worried about growth? Maybe try to avoid no ship ums.